The great filter, as described by Robin Hanson:

Humanity seems to have a bright future, i.e., a non-trivial chance of expanding to fill the universe with lasting life. But the fact that space near us seems dead now tells us that any given piece of dead matter faces an astronomically low chance of begetting such a future. There thus exists a great filter between death and expanding lasting life, and humanity faces the ominous question: how far along this filter are we?

I will argue that we are not far along at all. Even if the steps of the filter we have already passed look about as hard as those ahead of us, most of the filter is probably ahead. Our bright future is an illusion; we await filtering. This is the implication of applying the self-indication assumption (SIA) to the great filter scenario, so before I explain the argument, let me briefly explain SIA.

SIA says that if you are wondering which world you are in, rather than just wondering which world exists, you should update on your own existence by weighting possible worlds as more likely the more observers they contain. For instance, if you were born of an experiment where the flip of a fair coin determined whether one (tails) or two (heads) people were created, and all you know is that and that you exist, SIA says heads was twice as likely as tails. This is contentious; many people think that in such a situation you should think heads and tails equally likely. A popular result of SIA is that it perfectly protects us from the doomsday argument. So now I’ll show you we are doomed anyway with SIA.
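As a rough illustration of the coin example, here is a minimal Python sketch of the SIA update. The function name `sia_posterior` is made up for this illustration; the only inputs are the fair-coin prior and the observer counts (one for tails, two for heads) given above.

```python
from fractions import Fraction

def sia_posterior(priors, observers):
    """Weight each world's prior by its observer count, then normalize."""
    weights = [p * n for p, n in zip(priors, observers)]
    total = sum(weights)
    return [w / total for w in weights]

# Fair coin: tails creates one person, heads creates two.
tails, heads = sia_posterior(
    priors=[Fraction(1, 2), Fraction(1, 2)],
    observers=[1, 2],
)
print(f"P(tails) = {tails}, P(heads) = {heads}")
```

Under these assumptions heads ends up with twice the probability of tails, which is the "twice as likely" claim in the paragraph above.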

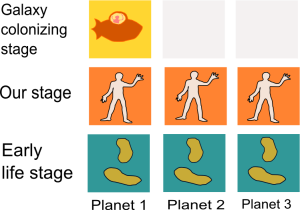

Consider the diagrams below. The first one is just an example with one possible world so you can see clearly what all the boxes mean in the second diagram, which compares worlds. In a possible world there are three planets and three stages of life. Each planet starts at the bottom and moves up, usually until it reaches the filter. This is where most of the planets become dead, signified by grey boxes. In the example diagram the filter is after our stage. The small number of planets and stages and the concentration of the filter are for simplicity; in reality the filter needn’t be only one unlikely step, and there are many planets and many phases of existence between dead matter and galaxy-colonizing civilization. None of these things are important to this argument.

The second diagram shows three possible worlds where the filter is in different places. In every case one planet reaches the last stage in this model – this is to signify a small chance of reaching the last step, because we don’t see anyone out there, but have no reason to think it impossible. In the diagram, we are in the middle stage: earthbound technological civilization, say. Assume the various places we think the filter could be are equally likely.

This is how to reason about your location using SIA:

- The three worlds begin equally likely.

- Update on your own existence using SIA by multiplying the likelihood of worlds by their population. Now the likelihood ratio of the worlds is 3:5:7.

- Update on knowing you are in the middle stage. New likelihood ratio: 1:1:3. Of course if we began with an accurate number of planets in each possible world, the 3 would be humungous and we would be much more likely in an unfiltered world.

Therefore we are much more likely to be in worlds where the filter is ahead than behind.
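The three steps above can be sketched in a few lines of Python. The per-stage planet counts below are an assumption: they are the counts that reproduce the 3:5:7 and 1:1:3 ratios in the list (three planets, three stages, one planet always reaching the last stage), and the world labels are made up for the illustration.

```python
from fractions import Fraction

# Populated planets at each stage (first, middle, last) in each world.
# Assumed counts, chosen to match the 3:5:7 and 1:1:3 ratios in the text.
worlds = {
    "filter before first stage": [1, 1, 1],
    "filter before middle stage": [3, 1, 1],
    "filter after middle stage": [3, 3, 1],
}
MIDDLE = 1  # index of the stage we find ourselves in

def normalize(weights):
    """Scale a dict of non-negative weights so they sum to 1."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}

# Step 1: the three worlds begin equally likely.
prior = {name: Fraction(1, 3) for name in worlds}

# Step 2: SIA update, multiplying each prior by the world's total population.
after_sia = normalize({name: prior[name] * sum(stages) for name, stages in worlds.items()})

# Step 3: condition on being in the middle stage, using the fraction of
# each world's population that sits in that stage.
after_stage = normalize({
    name: after_sia[name] * Fraction(stages[MIDDLE], sum(stages))
    for name, stages in worlds.items()
})

for name in worlds:
    print(f"{name}: after SIA {after_sia[name]}, after stage update {after_stage[name]}")
```

With these assumed counts, the world where the filter lies ahead of the middle stage ends up with three fifths of the probability, matching the 1:1:3 ratio.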

---

Added: I wrote a thesis on this too.

Related: Sleeping Beauty Problem. You appear to be a “thirder”.

Here is a nice variant of the problem for “thirders”.

Yes, SIA corresponds to ‘thirding’ on sleeping beauty.

This is sophistry, Katja. Like the DA it relies on a) a huge number of overconfident assumptions about things we don’t know (eg why should the three worlds begin equally likely? We know of no examples of the second one…) and b) ignoring the knowledge we do have, like the numerous ways we know species can go extinct, the different selection pressures on them, our knowledge of system-wide catastrophes, etc.

Such reasoning might show that the data we have has different implications than we thought, but it doesn’t just overwrite the data and reset our expectations.

As I said, this is a simplified model to make it easy to understand. If you modify the probabilities for different places the filter could be, based on knowledge we have of how species go extinct etc., you will get a similar result across a wide range of modifications.

Can you drill down to a real world example where this kind of reasoning is more useful than data? I’m skeptical that you’ll find a convincing one.

Incidentally, that ‘nice variant’ link is a friend of mine’s website. Such small world moments please me :)

If the question is why our planet wasn’t already colonized by spacefaring aliens, given so many suitable planets in the galaxy for intelligence like ours to evolve on, then the explanation is some combination of:

0. suitable planets are rarer than you guessed

1. it takes a lot more luck to get interesting replicators (DNA or similar) started than you guessed.

2. generally intelligent brains like ours are rarely reached and/or rewarded by those replicators’ evolution

3. the technological (or peacemaking) abilities of beings like us are more limited than you guessed

1-3 seem to be the filters you’re referring to.

Probably either no long-lived interstellar colonizer has existed in our galaxy, or it’s rather easier to completely extinguish an interstellar civilization than I’d imagine, or we’re (possibly) about to become the first one (or many have been in the same position but failed the same #3 filter we’re about to). So, how surprised should I be based on my beliefs about 0-2?

It feels like a horrible mistake to me to propose a generative process for “universes like ours” and to believe that we’ll be randomly assigned to one of the observer-slots in it (which I think is exactly what is required for anthropic reasoning and SIA specifically to be completely trustworthy). Any process I propose by which I assume our universe to be generated (yours seems like: with uniform probability, generate one of these 3 diagrams) is, in my opinion, based on crazy thinking, and I wouldn’t place any trust in the inference that results.

Nonetheless, it is really cool to think about anthropic reasoning. I agree with the conclusions you draw given your setup.

I’m not referring to particular filters.

I’m not proposing any generative process or your being assigned to slots. If you want to cross the road and think there is a 50% chance a car is coming does this also require you believe in a process that generates roads with or without cars and assigns you to slots? (one in each world in this case)

Fine, you weren’t interested in specifics, but 1-3 do explain why you might have a filter at each place.

I have a hard time imagining a prior belief about the universe that doesn’t correspond to one of:

*this* universe was generated out of a distribution over all possible universes

or

a spectrum of many possible universes exist as if they were sampled from a distribution of possible universes

If you just have a feeling *for no reason* about the above distribution, I don’t follow why you do. In other words: why consider middle/late filters as equally likely a priori? In some sense it’s the best you can do, but in another it’s nonsense.

In other words, I’m questioning the trick (which you definitely do employ) of saying “I, an observer of about X intelligence, am slotted into any one of the possible observer-slots in any one of the possible universes, by first choosing, with uniform probability, a universe (and time?*) that has any observers in it, and then choosing, uniformly at random, one of the observer-slots in that universe for me to occupy*.” Or if you’re not saying precisely that, you’re saying something with equivalent implications. I find this approach quite unjustified, but I’m interested in conclusions that can be drawn from it: since it’s hard to justify any prior over universes, a more uniform one is at least less unjustifiable than the alternatives.

* let’s take for granted that I have had a continuous existence since birth, so the slotting would happen at birth?

My major objections are

a) the frame of indexicality seems confused

b) the frame of “impossible possible worlds” is obviously confused

c) arguably a sub-set of a), it’s very far from obvious that we exist early in the universe as opposed to being “in a simulation”, which is itself not as simple a concept as it sounds.

Care to elaborate on this confusion?

Interesting speculation, but I find the tradition of attempting to prove such things with equations to be strange and futile.

Katja, do you have any feelings about this result? A lot of the people who discuss doomsday arguments and anthropic reasoning also have heavy emotional investments in transhumanism, Singularity outcomes and so on, and will react to the idea that “our bright future is an illusion” with gloom, or will even be motivated to contest or dismiss the reasoning.

Or it could be that you regard this as something of an if-then exercise – *if* you start with certain premises, *then* this result follows – but don’t regard the premises themselves as particularly certain…

Hello, Katja.

I think that SIA is wrong; even so, your argument–if correct–might make an interesting reductio against SIA.

However, I think your argument has a flaw: you assume that the probability of getting past the great filter is the same in your World 1 as in your World 3. But the current evidence contradicts that assumption. If the real world is like your World 1, then we know of one instance in which the great filter has been passed; in contrast, if the real world is like your World 3, then we know of no such instance. Therefore, based on our current information, which we must apply to both World 1 and World 3, the probability of a planet getting past the great filter in World 1 is much, much, greater than in World 3. Based on our probabilities, though World 3 will have a greater number of populated planets than World 1 will have, World 1 will have many more virtually-infinite populations. In total, World 1 will have many, many more people than World 3 will have. Therefore, the effect of SIA is the opposite of what you claim.

Even if the probability of getting to galaxy colonization were equal in Worlds 1 and 3, the total number of people in that stage would swamp the number of people in the middle stage, and the effect of SIA would be virtually nil.

This kind of argument does not allow conclusions to be drawn about DOOM. It implies that progress roadblocks exist – *not* that those roadblocks represent disasters or setbacks.

Hi Katja,

Very interesting argument.

My problem with it is the assumption that extremely advanced civilizations are very rare or impossible. We have no basis to make that assumption. Given its size, it is perfectly possible that the Universe contains a large number of highly advanced but non-communicating civilizations. Furthermore, it’s possible that the most probable destiny for a civilization like ours is to survive for a very long time. Or not. We just don’t know.

Breaking this assumption invalidates your argument, or am I missing something?

The basis is that we don’t see any. If there are some but they haven’t spread this far, the same argument applies to the set of planets that began close enough that they would have spread this far under whatever scenario of success we are wondering if we can look forward to.

If you take into account that it is very unlikely that the speed of light barrier can be breached in our universe (given our current knowledge) then it seems not so unlikely that we would not see any civilizations. They are simply far away and possibly stuck to a few solar systems. Or maybe they even have no interest in galactic exploration but have not been filtered either.

Sebastian, I’m not certain yet whether I believe Katja’s original argument here, but I do believe Robin Hanson’s original formulation of The Great Filter. Astronomers have found a 12.7 billion year old planet 7200 light years away. There are around 10^6 stars inside that distance, and 9 × 10^21 stars in the visible universe.

If 12.7 billion year old planets are evenly distributed with the frequency we’ve seen so far, and intelligent life arose at the same planetary age on each of them that it did here, we’d be within the light-cone of 4 × 10^15 extraterrestrial civilizations.

Note also Robin Hanson’s Malthusian observation that even if most individuals in a highly advanced civilization aren’t interested in galactic exploration or expansion, the few that are will quickly outnumber the others.

This sounds right, except that as far as we know, it is not possible to travel faster than the speed of light. That could make galactic exploration an impossibility.

Another possibility for why there is a lack of galactic exploration could be that planets which are hospitable to life develop life of their own, making them inhospitable to other types of life. Of course we do not know this for a fact, but it is a possibility we should not discount without empirical evidence (which is not yet forthcoming).

The argument is a valid use of Bayesian statistics, and I totally buy the mathematical analysis given your assumptions about the state of possible futures and the prior probabilities over them (that is, the probabilities we would place on the possibilities before we know we exist).

However, the analysis depends crucially on the assumption that people, once they are brought into existence, have no influence on the future path. If people can take actions that increase the chances of species survival (by creating more people, for instance) then you need to make sure that the prior probabilities imposed on the state space are consistent with the assumptions about people’s ability to improve their future.

This isn’t a problem I can solve in my head, but I suspect that many reasonable assumptions will lead to the result that simply knowing I exist will mean the future is brighter than it would be if I didn’t.

It doesn’t actually rely on people not being able to influence their path, just the assumption that you can’t influence your path more or better on average than other people in your situation.

But if every person can influence the path the same, that still imposes potentially severe restrictions on the possible distributions of population over time.

Your model allows people to look at the population and infer that the great filter must be ahead, not behind, because there is no reason to believe that a larger population increases the chance that the population will continue to grow–a standard feature of most evolutionary/epidemiological models.

Assume, for example, that there is a cataclysm that is avoidable if someone comes up with a new discovery, so the more people there are now, the more likely it is that someone will make the discovery. Alternatively, assume that the reason a population dies is simply due to attrition, because random forces lower the population enough at the same time that fertility rates are low. In that case, your Bayesian reasoning alone would indicate that if I exist, the likelihood is that population will fall (because I am most likely to be born in a high-population period). However, the fact of my birth–and that it is a high-population period–taken alone, will suggest the opposite.

I am sure there are settings in which either effect can predominate, but your doomsday analysis won’t be unambiguously correct if the fact of someone’s existence can alter the timing or extent of doom.

Consider the situation where you add another 4th possible world where all civilizations reach the final stage and there is no filter at all. If that one existed, we would have the ratios as 3:5:7:9. So following your argument through, we are actually most likely to live in a universe where there is no filter at all.

The reason the filter is included is that we don’t see anyone at that level. But you are right that there is a chance that everyone would reach that level and we are just the first. So you could include a fourth world where everyone reaches the end, but only one is first, and we are that one. This has the same chance as any of the low probability ones because of the low chance of being first.

We don’t have to be first, it could just be that other worlds are so far away that we can’t see the civilizations there. (Sorry for double post, can’t delete old ones it seems)

True, but see above response to Telmo.

The Great Filter – according to its definition – doesn’t necessarily stop civilizations from eventually going galactic. It stops OR DELAYS them.

All civilizations eventually making it does not imply that there is no filter. There IS a filter – our ancestors have been being filtered out quite effectively over the last 3 billion years.

Perhaps this muddle arises from the terminology. Being “filtered” SOUNDS pretty terminal. It SOUNDS as though civilizations are permanently filtered out. It is the terminology of DOOM.

This seems really obvious to me given that we should ‘third’ in the Sleeping Beauty problem. I don’t understand any of the objections given in these comments.

In the Sleeping Beauty problem you know the probability of the coin turning up heads if you toss it. Suppose the coin is unfair. The higher the probability of heads, the closer the two answers (sleeping beauty and experimenters) become.

In this case, we don’t know the frequentist probability of a civilization at our stage lasting X more years. We don’t even know if none/some/many other such civilizations exist in space-time.

The non-inclusion of the 4th world that Sebastian describes is pure speculation.

But Telmo, if you want to use SIA, then you’ve got to consider all possible worlds. Clearly a universe where there is no filter at all is conceivable. So you’ve got to include it in your calculation. And clearly, that world is always the most likely one to be in as it will always boast the largest amount of life.

It actually wouldn’t be more likely than a world where you are before the filter after you update on knowing what stage you are at – extra life that you know you aren’t doesn’t add to the likelihood.
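A small Python sketch of this point, extending the simplified three-planet model with the proposed fourth world. The per-stage counts and world labels are assumptions chosen to reproduce the 3:5:7:9 ratio discussed above, and the function name `sia_then_stage` is hypothetical. The sketch leaves out the observation that we see no one at the last stage, which is the reason given earlier for excluding a no-filter world.

```python
from fractions import Fraction

def sia_then_stage(worlds, stage):
    """Equal priors, SIA weighting by total population, then conditioning on stage."""
    weights = {}
    for name, counts in worlds.items():
        total = sum(counts)
        sia_weight = Fraction(total)               # equal priors cancel on normalizing
        in_stage = Fraction(counts[stage], total)  # share of observers at `stage`
        weights[name] = sia_weight * in_stage
    norm = sum(weights.values())
    return {name: w / norm for name, w in weights.items()}

# Populated planets at each stage (first, middle, last); assumed counts.
worlds = {
    "early filter": [1, 1, 1],
    "middle filter": [3, 1, 1],
    "late filter": [3, 3, 1],
    "no filter": [3, 3, 3],
}

posterior = sia_then_stage(worlds, stage=1)  # stage 1 is the middle stage
for name, p in posterior.items():
    print(f"{name}: {p}")
```

Under these assumptions the no-filter world ties with the late-filter world once you condition on being in the middle stage: its extra last-stage population adds nothing, because only the middle-stage count survives the two updates.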

But what does it mean to assign a probability to a single event? Take for example crossing a road. If I am about to cross a road, look and see a car coming, then there’s nearly 100% chance of a car coming (small probability of my vision being off). Equally, if I look, in the right place, and don’t see any cars, there’s a nearly 0% chance of a car coming. The 50% probability doesn’t apply.

Now let’s say I can’t look for some reason. So then what’s the probability of a car coming? It surely depends on the time of day that I am crossing the road, rush hour tends to be busier so higher probability. But what is the exact distribution of a rush hour? How about if there is a major event nearby drawing more cars in, or if road closures for this event are keeping some through traffic away? How about the weather? If it’s a nice day some car drivers might abandon their car for biking or walking. What’s the probability of an accident at this time meaning an ambulance coming through a nearby road disrupts my road’s flow through?

Without some model of what causes cars to come, assigning a probability of 50% to a car coming for a single event doesn’t make sense to me (even if we had a good historical data set).

And the same with your SIA. We don’t have any model of how these worlds are generated, and we don’t have any comprehensive datasets on the number of worlds other than our own. So how philosophically could we assign a probability to a single event?

Sorry, I was misleading by choosing a simple number. I didn’t mean there is 50% chance of a car if you don’t know, but rather if you knew some other things you might estimate it at that or any other number, and that that wouldn’t mean you thought worlds were generated at that rate necessarily.

I suppose you also think it’s philosophically impossible to assign a probability to how a coin will land, as you know so little about the important aspects of its fall that will propel it to one outcome or another?

Sebastian, I believe I expressed myself in a rather confusing way. I fully agree with you.

“The non-inclusion of the 4th world that Sebastian describes is pure speculation.”

To clarify: the non-inclusion of your 4th world (no filter) by Katja is speculation. There are zero known cases of total extinction of life on a planet, so “no filter” is conceivable.

Oh, my bad. I thought you meant that my 4th world idea is pure speculation. Never mind.

Your reasoning is good, and we may soon bump into the Great Filter in the sky, but my optimism for humanity’s future is lifted by a less rigorous chain of thought:

The universe seems to favor life and it may be that life is necessary for some reason, perhaps in the development of descendant universes and therefore the spread of life beyond our planet may be part of a normal process.

Of course even if this is the case our local infestation might not survive, let alone flourish. However, irrational expectations help get me up in the mornings and that’s worth something.

We don’t have to be first, it could just be that other worlds are so far away that we can’t see the civilizations there.

Katja Grace – we have an unworded model of how coins fall. Although if we are talking about a single coin toss of an otherwise unknown coin – how do I know if the coin is fair?

The important, useful feature of your model is that it is empty – if you knew more about how coins fell you wouldn’t be able to rely on them to make 50% probabilities.

You don’t know it’s fair, but you don’t know which way it is unfair if so. Is this a problem?

But it’s *not* empty. I have seen lots of coins tossed during my childhood. I know that they spin when they go up into the air, and that there’s variation in when the catcher catches them and I know that it’s supremely unlikely that a coin would land on its edge, again from that childhood experience. I can’t fully articulate all of this, but I know it, roughly.

Look at it another way: say I was tossing a die rather than a coin. Would you say that the die had a 50% chance of coming up heads? Alternatively, would you say that a coin has a 1/6th chance of coming up 3? If you would predict differently for a die versus a coin then your models of coin tossing and die tossing are different, which means that they can’t both be empty.

And, also, what does it mean to “rely” on something? If it’s something trivial then relying on a coin toss to make a decision is fine. If a madman captured me and threatened to kill me if I didn’t come up with a probability distribution that had a true 50-50 split, to be tested using 10,000 trials and some reasonable tests for randomness, I certainly wouldn’t rely on an unknown coin with no further investigation.

Yes, I think that’s the problem. Clearly neither of us has articulated the problem perfectly, judging by our lack of agreement, but I think your restatement of my problem is on the right track.

Returning to the coin, that we don’t know if it’s fair or not, and if it’s unfair, that we don’t know which way it is unfair. Now yes, we could assign a 50% probability that the coin would come up heads on the next toss. But we could also assign a 10% probability, or a 90% probability, or a 99.9999% probability. What philosophical basis is there for choosing a 50% probability of heads rather than a 99.9999% probability of heads for a single toss of an unknown coin?

Or take the other situation, we know that the coin came up heads, but we don’t know anything else about the coin or the tossing procedure. Why should we say that that coin had a 50% probability of coming up heads, rather than a 99.9999% probability? Or a 0.0001% probability?

Actually, there is a good basis for that. The reason this works is that, from an information-theoretic perspective, the 50% distribution maximizes the entropy of the state, i.e. minimizes our foreknowledge of the outcome. In other words, if you have n outcomes and you know nothing about the system, you’d better pick the maximum entropy distribution. If you know SOMETHING, but not everything about the system, then you should pick the maximum entropy distribution that still satisfies your knowledge of the system.
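To make the entropy claim concrete, here is a minimal Python sketch that searches a grid of candidate heads-probabilities for a two-outcome coin and reports the one with the highest Shannon entropy. The grid resolution and the helper name `entropy_bits` are choices made for this illustration only.

```python
import math

def entropy_bits(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Candidate values for P(heads), from 0 to 1 in steps of 0.001.
candidates = [i / 1000 for i in range(1001)]
best = max(candidates, key=lambda p: entropy_bits([p, 1 - p]))

print(f"max-entropy P(heads) on the grid: {best}")
print(f"entropy at 0.5:      {entropy_bits([0.5, 0.5]):.4f} bits")
print(f"entropy at 0.999999: {entropy_bits([0.999999, 0.000001]):.6f} bits")
```

The same helper works for n outcomes: with no constraints, the uniform distribution over a six-sided die scores higher than any lopsided alternative you pass in.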

In other words, if you have n outcomes and you know nothing about the system, you’d better pick the maximum entropy distribution.

There’s a contradiction here. If I know nothing about the system, how do I know that I have n outcomes?

I think I see your point in the case of a coin. Chaos theory indicates that very small changes in starting points can result in major differences in outcomes, and this appears to apply to the coin generating process. Thank you, Sebastian and Katja for explaining the coin issue.

No problem. You are right about the contradiction though, I should have said that we know nothing other than all possible states of the system (like in the case of the coin).

Probability is about information, not reality.

When you say something is 50% or 90%, you also need to say how sure you think that it is 50% or 90%. That’s why in polls you hear stuff like “30% of people believe in God, 19 times out of 20”. You’re missing the latter part.

It’s important to know that when the 1 time out of 20 comes up, that all bets are off. You can think of it as an error rate.

Let’s say you flip a coin 10 times, and 9 times out of 10 it comes up heads. So, is this a 90% coin? Chances are good. But it could also be a rare case of a 50% coin being really lucky. After all, even for a 50% coin, with n flips there is a 1/(2^n) chance that you’ll flip all heads. As the sample size increases, you can be more certain of what the coin’s bias is.
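For a rough sense of the numbers in this example, the following Python sketch computes how likely 9 heads in 10 flips is under a fair coin versus a 90% coin, using the standard binomial formula. The helper name `binom_pmf` is made up here; `math.comb` is the standard-library binomial coefficient.

```python
from math import comb

def binom_pmf(k, n, p):
    """Probability of exactly k heads in n independent flips with P(heads) = p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)

n, k = 10, 9
fair = binom_pmf(k, n, 0.5)    # a 50% coin getting lucky
biased = binom_pmf(k, n, 0.9)  # a 90% coin behaving typically
all_heads_fair = binom_pmf(n, n, 0.5)  # the 1/(2^n) case

print(f"P(9 of 10 heads | fair coin) = {fair:.4f}")
print(f"P(9 of 10 heads | 90% coin)  = {biased:.4f}")
print(f"likelihood ratio (90% vs fair) = {biased / fair:.1f}")
print(f"P(10 of 10 heads | fair coin) = {all_heads_fair:.6f}")
```

The likelihood ratio only says how strongly the ten flips favour one hypothesis over the other; how much to believe either still depends on what you thought about the coin beforehand.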

So for a sample size of 0, you can choose whatever bias you want, 50%, 90%, whatever, but you’ve essentially got a 100% chance that you’re wrong :P

Re: “When you say something is 50% or 90%, you also need to say how sure you think that it is 50% or 90%.”

No you don’t. Probability is a measure of uncertainty. You don’t have to say how uncertain you are about your uncertainty. That should be factored into the original uncertainty estimate.

Not all 50%s are the same. There is a difference between 1/2 and 1000/2000. It’s the significant digits.

If we add another sample to both, say 2/3 and 1001/2001, the change goes from 50% in both cases to 66% in the first and 50.02% in the second. That’s a pretty dramatic difference in results.
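The arithmetic here can be checked with a short Python sketch. The function name `shift_after_one_more_success` is hypothetical, and the estimate used is simply successes divided by trials, as in the comment above.

```python
from fractions import Fraction

def shift_after_one_more_success(successes, trials):
    """Return the frequency estimate before and after one extra success, and the change."""
    before = Fraction(successes, trials)
    after = Fraction(successes + 1, trials + 1)
    return before, after, after - before

for k, n in [(1, 2), (1000, 2000)]:
    before, after, shift = shift_after_one_more_success(k, n)
    print(f"{k}/{n}: {float(before):.2%} -> {float(after):.2%} (shift {float(shift):.2%})")
```

The small sample moves by one sixth on a single new observation, while the large one moves by 1/4002, which is the difference in stability the comment is pointing at.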

In the case of a sample size of 0, you’ve got 0/0, which means the probability is essentially N/A.

So in essence you are right. You can ‘factor in’ your uncertainty of your uncertainty by adding or removing a few zeros from the end of your probability, 50% vs 50.00% for example.

Mike, significant figures do not normally signify confidence levels.

A probability is itself a measure of uncertainty. The concept of “uncertainty about uncertainty” is not needed – that’s simply greater uncertainty. The point you are trying to make is just wrong.

Significant digits signify precision. As far as concepts go, ‘precision’ and ‘confidence’ are pretty close.

Because Tracy is skeptical of any a priori knowledge, I used a frequentist approach, which defines probability as the frequency over the sample size, e.g. 1/2, 1000/2000. Expressing the probability as simply 50% results in the loss of the sample size information, which is important to the complete definition of probability as it effectively tells us the ‘uncertainty of our uncertainty’.

Even if I were to use a Bayesian approach, I would still need to assign a weight to my prior, which serves the same function.

I’m afraid I’m rather more skeptical about the frequentist approach. For example, let’s say you have ten thousand samples of the number of cars on your local road. How do you use that to estimate a probability of a car being on the road at any one time? You could just take your full sample and work out those odds. Or you could divide your sample up into rush hours and non-rush hours, and work out odds for rush hours and non-rush hours. Or you could divide it up by hour of the night (3am and 5am are both not rush hours, but I would expect a different probability of a car being on the road there). How about if you add in the times when there’s a major event on nearby and lots of people park on your street?

How do you account for growth in traffic over time?

There are numerous probabilities you could calculate for the odds of there being a car on your road at any one point in time. What would any of them mean?

Yes, you can divide things in any way you like, and you will get probabilities that are all different. So which one is the correct one? They all are!

Probability is about information, not reality.

It is subjective, in the sense that it is defined by how one frames the situation. As a statistician, your job is usually to take a look at all the different ways you can see a situation, and choose the frame that gives you the best odds for what you want to do.

Yes, that’s my point.

This doesn’t seem right. For example, I was told by one of my statistics teachers that during WWII, some Allied POWs did things like tossing the same coin 10,000 times to see how biased it was (they were pretty bored). Say a POW has tossed the same coin 10,000 times and said, based on a statistical analysis of the past coin tosses, that his best guess was that the next coin toss of that same coin had a 50% chance of being heads.

Now say the same POW’s best estimate is that there was a 50% chance that the Allied advance would free him before Christmas (the other 50% containing both his best estimate of odds of the Allies not getting there before Christmas and his best estimate of the odds of all the other possible outcomes, like the POW dying first, or the Nazis surrendering, etc.).

Can you really say that the POW should be equally confident in both his probabilities? Surely in the second case, the POW never having been rescued by the Allies towards the end of WWII before, less confidence is warranted than in the first case?

And if not, how should he adjust his 50% number in the second case?

Think of it this way: Imagine we live in a classical deterministic world. Then the *actual* probability of a fair coin flip coming out one way is actually 100%. Using physics, we can work out exactly what will happen.

But the probabilities need not reflect the determinism of nature. They are a reflection of our own information. Given no other information about where and under what conditions the coin will be flipped except that it is fair, we can be only 50% certain of any of the outcomes, even though the “actual” probability is 100% for one of the outcomes, and of course 0 for the other.

Actually, if I understand chaos theory, even in a classical deterministic world, using physics we could not work out exactly what would happen – because changes in the starting position that are too small for us to measure affect the overall result. All real measuring instruments are inaccurate.

Also, Sebastian, I think you miss the point of my comment just here. Let’s take your case where you say “except that it is fair” – how do we know that the coin is fair? In the real world we don’t get told this stuff like we do in statistics tests. In my hypothetical example I reflected this part of reality, by saying that the POW had tossed the coin 10,000 times and his best estimate based on that history was that the coin was fair. He could instead have worked out that the odds of heads next were 60% (say 6,000 of the tosses came up heads, i.e. the coin was biased), and have more basis for confidence in the 60% probability estimate than in his estimate of the probability of being freed by Christmas. A new piece of information could have led him to increase his probability of being freed by Christmas to 75%, and I would still say he should be more confident about his 60% probability estimate than his free-by-Christmas estimate.

You are correct about chaos theory meaning small errors in measurements make it difficult to predict the future. However, in principle, if we have a deterministic universe there are no probabilities in nature itself.

As you also point out, you cannot be sure in reality that the coin is fair or not fair. Intuitively, I can see where you are going with this. I suppose you could treat your probability as a parameter of the system and then make an estimate of it, and you would also get some kind of a probability that the probability is what you are saying. This could work formally. From this perspective, the probability one gives in the end is then the average probability. I wonder if there is a case to be made to use the maximum likelihood probability instead.
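
To make the "probability as a parameter" idea concrete, here is a minimal sketch. It assumes a uniform Beta(1, 1) prior over the coin's heads probability, which is my choice for illustration and not something either commenter specified; the function names are hypothetical. It compares the posterior mean (the "average probability") with the maximum likelihood estimate, and reports the posterior spread as a rough measure of confidence.

```python
import math


def beta_posterior(heads, tosses, prior_a=1.0, prior_b=1.0):
    """Return (mean, std) of the Beta posterior over P(heads).

    Assumes a Beta(prior_a, prior_b) prior; Beta(1, 1) is uniform.
    """
    a = prior_a + heads
    b = prior_b + (tosses - heads)
    mean = a / (a + b)
    var = a * b / ((a + b) ** 2 * (a + b + 1))
    return mean, math.sqrt(var)


def max_likelihood(heads, tosses):
    """Maximum likelihood estimate of P(heads): the observed frequency."""
    return heads / tosses


# The POW's 10,000 tosses with 6,000 heads, versus only 10 tosses with 6 heads.
mean_big, std_big = beta_posterior(6000, 10000)
mean_small, std_small = beta_posterior(6, 10)

print(f"10,000 tosses: mean={mean_big:.4f} std={std_big:.4f} "
      f"MLE={max_likelihood(6000, 10000):.4f}")
print(f"10 tosses:     mean={mean_small:.4f} std={std_small:.4f} "
      f"MLE={max_likelihood(6, 10):.4f}")
```

Under these assumptions both data sets give a point estimate near 60%, but the spread after 10,000 tosses is far narrower than after 10. With a lot of data the posterior mean and the maximum likelihood estimate nearly coincide, so the choice between them matters mostly when evidence is thin.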

The problem is that you have no frame to define your 50%. I mean, 50% of what?

If you were to say there are 1000 POWs in the war, and 500 of them were rescued in the advance, then that means that there is a 50% probability that he is one of the rescued POWs.

A 50% chance of being rescued, in and of itself, doesn’t mean anything without a context to frame it in.

But I am talking about forward-looking probabilities. The POW in my example can’t know the ratio of POWs freed by the Allied advance, because the advance is still under way; it hasn’t finished happening yet.

The POW will either be rescued before Christmas, or he won’t be (perhaps because he died from a bombing raid first). Now, if at a given moment on the 1st of March the Allied soldiers had just arrived at the compound and the German guards were putting up their hands, the POW’s probability of being rescued before Christmas is pretty high. If it’s 24 December and there’s no sign of any Allied forces in the vicinity, then I’d say the POW’s probability of being freed before Christmas is pretty low.

That’s the sort of information that a “probability of being freed before Christmas” is trying to capture. Are we in something more like the 1 March situation, or more like the 24 December? Somewhere in between my two extreme examples, there might be a point where the information the POW has (of which he is aware that some of it might not be true), indicates that he maybe has a 50% chance of being freed by Christmas, with of course a wide band of uncertainty about that 50% figure.

What you’re doing is taking a purely frequentist approach, while I’m taking an evidential approach.

One can still ask, given a number of people in similar situations in the past, how many escaped, and build a probability out of that.

The larger point though is that statistics tell you about results, and not much about the process of how those results came about, be it random or otherwise. And they don’t apply to small sets of events, for the same reason that you can’t derive a probability from a small set of events; except in very trivial cases it just doesn’t manifest itself at that level.

Trying to divine the destiny of a very specific individual using statistics is like believing that a single coin flip must be heads because the coin is fair and the last 10 flips were tails.

My advice for our POW friend is to create a set of plans for dealing with both cases, and not worry too much about the probability of either, or about other things outside his control.

Mike, I don’t think statistics tell us that much about results, by themselves, without an understanding of the process that generated the results. Which is part of my criticism of the SIA.

I don’t however understand what you are saying about “divining the destiny of a specific individual…”. I’m guessing by divining the destiny of a specific individual you mean the POW is guessing about the probability of him being rescued before Christmas. How is his guess like the gambler’s fallacy? He’s not saying that “because I wasn’t rescued before Christmas last year, I will be rescued before Christmas this year” so I don’t see the connection. Can you please explain what the connection you see is?

Whether our hypothetical POW is better off ignoring the odds or not strikes me as a matter of psychology, not statistics.

You know the law of large numbers? It says that as the sample size becomes larger the probability becomes more stable. The obvious corollary to that is that as the sample size becomes smaller the probability becomes more volatile. This is the essence of the Gambler’s fallacy.

If we want to think about all of the POWs as a whole, and come up with a strategy that will on aggregate benefit them, then by all means use statistics. However if you don’t care about the aggregate results, that strategy might not make a whole lot of sense from the individual perspective.

Classic example is the lottery. The odds are low that you’ll win, and on aggregate it would be a lot smarter for everybody to save their money instead of tossing it away playing it. However from the individual perspective the costs of playing the lottery are distributed, while the benefits are concentrated. This makes the cost:benefit ratio extremely attractive for an individual player even though it is horrible for the group as a whole.

So what I’m saying is that while the probabilities might usefully inform the strategies of a General of the army, it is not so interesting for the actual POW; there are better conceptual tools out there for the individual to make decisions with.

As for process vs results: maybe the easiest way to think about it is the case of a box of balls, half red and half black. When we say that there is a 50% chance of picking a red ball, we are not saying that there is a 50% chance of a black ball being a red ball.

The 50% applies not to the individual balls, but to the information we have about the group of balls. It tells us nothing about how the red balls became red balls, or even why half of the balls are red, just that they are. And it is certainly not implying that the balls only become red or black once we pick them.

I think the time thing might be throwing you off? That the actual results are only available in the future doesn’t mean anything other than that we don’t have access to the information. If they had already happened in the past, but you didn’t know about it, it’d be the same thing.

Mike, thanks for explaining what you mean by the Gambler’s Fallacy. I still don’t know how you think this applies to my POW. I have not specified that the POW will do anything, or not do anything, based on his guess at the probability of being released before Christmas, so all your comments about strategies, cost-benefit ratios and decision-making tools strike me as irrelevant.

As for your example of the box half full of red balls and half of black, you are starting with the situation where you know something about the process, not merely the results.

Let’s say I have no idea what is in the box and no way of identifying what’s in the box apart from drawing individual items out. I draw 10 items from the box. All of them are black balls. This could be driven by the following situations:

1. 100% of the items in the box are black balls.

2. 50% of the items in the box are black balls, 50% are red balls, they are evenly mixed and by pure chance I have randomly drawn 10 black balls.

3. 50% of the items in the box are black balls, 50% are red balls, but the black balls are on top of the red balls so it’s not random that I have drawn 10 black balls.

4. 1 item in the box is a red ball and the 99 other items are all black balls, so it’s not surprising that I’ve drawn only black balls.

5. 100% of the items in the box are red balls that turn black when exposed to daylight (in which case the balls do become black once we pick them, contrary to your assumptions).

And there could be an infinite number of other situations driving the draw of 10 black balls, e.g. only 2 red balls in the box and the rest black balls, 2 apples and the rest black balls, etc. This is why I say that statistics by themselves don’t tell us much about results.
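
As a rough illustration of how the draw bears on some of these situations, the sketch below computes the probability of drawing 10 black balls in a row under situations 1, 2 and 4. The box size of 100 items and the assumption of random drawing without replacement are mine, added for illustration; situations 3 and 5 depend on the process rather than the contents, so they are not modelled here.

```python
from math import comb


def prob_all_black(n_black, n_total, draws):
    """Probability that `draws` items taken at random, without replacement,
    from a box of `n_total` items are all among the `n_black` black balls."""
    if draws > n_black:
        return 0.0
    return comb(n_black, draws) / comb(n_total, draws)


# Hypothetical box of 100 items in every case (an assumption for illustration).
likelihoods = {
    "1: all black": prob_all_black(100, 100, 10),
    "2: half black, well mixed": prob_all_black(50, 100, 10),
    "4: 99 black, 1 red": prob_all_black(99, 100, 10),
}

# Relative plausibility if the three situations started out equally likely.
total = sum(likelihoods.values())
for name, lik in likelihoods.items():
    print(f"{name}: likelihood={lik:.6f} posterior share={lik / total:.4f}")
```

Under these assumptions the draw counts heavily against a well-mixed half-and-half box but barely separates "all black" from "99 black, 1 red". Situations 3 and 5 would make the observed draw close to certain, which is the point made above: the counts alone cannot rule them out without knowing something about the process.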

As for the time thing, it’s not throwing me off. As I said, I was talking about evidential probabilities, not frequentist ones. The reasons why we can’t know past probabilities are irrelevant. Please stop assuming I’m an idiot.

I do not think you are an idiot. That you’re interested in talking about this is evidence for me that you are not.

Tracy W, I found a good explanation of this on lesswrong.com, in two comments on a thread about saying “I don’t know”:

HalFinney:

Is it reasonable to distinguish between probabilities we are sure of, and probabilities we are unsure of? We know the probability that rolling a die will get a 6 is 1/6, and feel confident about that. But what is the probability of ancient life on Mars? Maybe 1/6 is a reasonable guess for that. But our probabilistic estimates feel very different in the two cases. We are much more tempted to say “I don’t know” in the second case. Is this a legitimate distinction in Bayesian terms, or just an illusion?

Eliezer_Yudkowsky:

Hal, you have to bet at scalar odds. You’ve got to use a scalar quantity to weight the force of your subjective anticipations, and their associated utilities. Giving *just* the probability, just the betting odds, just the degree of subjective anticipation, does throw away information. More than one set of possible worlds, more than one set of admissible hypotheses, more than one sequence of observable evidence, can yield the final summarized judgment that a certain probability is 1/6.

The amount of previously observed evidence can determine how easy it is for additional evidence to shift our beliefs, which in turn determines the expected utility of looking for more information. I think this is what you’re looking for.

But when you have to actually bet, you still bet at 1:5 odds. If that sounds strange to you, look up “ambiguity aversion” – considered a bias – as demonstrated at e.g. http://en.wikipedia.org/wiki/Ellsberg_paradox

PS: Personally I’d bet a lot lower than 1/6 on ancient Mars life. And Tom, you’re right that 0 is a safer estimate than 10, but so is 9, and I was assuming the tree was known to be an apple tree in bloom.

http://lesswrong.com/lw/gs/i_dont_know/dpx

http://lesswrong.com/lw/gs/i_dont_know/dpy

So Eliezer is saying roughly what I am, our degree of confidence about a probability estimate can rationally vary.
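
A small sketch of that point, under assumptions of my own: two Beta distributions with the same mean of 1/6, one backed by very little evidence and one by a lot, are updated on the same five new observations. The specific parameters and the function name are hypothetical, and treating "ancient life on Mars" as a repeatable trial is purely illustrative.

```python
def updated_mean(a, b, successes, failures):
    """Mean of a Beta(a, b) belief after observing new successes and failures."""
    return (a + successes) / (a + b + successes + failures)


# Both beliefs start at a probability of 1/6.
weak = (1, 5)          # little prior evidence (hypothetical numbers)
strong = (1000, 5000)  # lots of prior evidence (hypothetical numbers)

print("before:", updated_mean(*weak, 0, 0), updated_mean(*strong, 0, 0))

# The same surprising evidence: five successes in a row.
weak_after = updated_mean(*weak, 5, 0)
strong_after = updated_mean(*strong, 5, 0)
print("after: ", weak_after, strong_after)
```

Both beliefs justify the same betting odds beforehand, but the weakly supported one moves a long way on the new evidence while the strongly supported one hardly moves. That is one way to read "confidence" in a probability estimate without changing the odds one bets at.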

Confidence, yes; but not the precision.

My post countering the argument can be found at:

http://lesswrong.com/lw/1zj/sia_wont_doom_you/

this is an interesting thought experiment, nothing more (why do really smart people so consistently mistake what’s basically idle speculation for real analysis?). as others have noted, the number of completely unsupported assumptions is obvious, and they do all the work. this is like a chimp musing about a nuclear reactor based on the perception of heat, nothing more. we really know next to nothing about biology, evolution (how life began, not how it evolved), physics, cosmology. anyone who says different is ignoring the paradigms routinely shattered, revealed to be off in important ways, etc.

I think you are right, but the great filter may be that “they” are here, but we do not see them.

Another question could be how long the gap between each filter is. If most filters are in front of us, we want to know how far in front.

Using SIA it seems like the longer between the first and last civilisations passing the previous filter, the longer the amount of time before the next filter. Basically if civilisations reach our stage years apart then the universe will be most full of life if the first civilisation to reach this stage is still around when the last civilisation reaches the stage and SIA would suggest that our universe is therefore of this form.

Of course, this would also need to be balanced by the fact that some civilisations would pass the next filter and greatly increase in population. But if we believe this rate of success is high then the doomsday argument goes away anyway, so we can presume it’s fairly low. This still means the result would fail to hold if the increase in population more than balanced the decrease in surviving civilisations, in which case the opposite result would hold – we can assume a short gap between the previous filter and the next.

We also need to take into account the fact that moving from the previous stage to this stage may have decreased observer numbers overall (i.e. population increase doesn’t balance failure rates). In that case, we are more likely to be one of the first groups to reach our stage, as observer numbers will be at their maximum at that point. But that already negates the force of the doomsday arguments, as Fermi’s Paradox is an obvious result of our primacy.

Interesting perspective. At the moment we are limited by our science, but in the future it may be possible to overcome the limit on how long people can live. If we can increase scientific knowledge enough to allow people to live almost indefinitely, the speed at which things can travel becomes less important, provided resources can be used to support the travel. If I have a lifespan of x and it takes 2x to get to a place, then it’s not possible; now let’s say I live for y years and 2x is smaller than y, and it becomes possible. Hence in the future the speed of light may not be a barrier.

Mark, speed of light is already not a barrier for the traveller. Given an arbitrarily large amount of energy one can get to any place in the universe in what seems to them to be an arbitrarily short amount of time. However, no one will ever actually see the traveller moving faster than the speed of light and covering a distance greater than that given by the speed of light barrier. This is the paradox of special relativity and is resolved by the idea of a different speed of the passage of time. For the traveller the time passes very quickly – what seems like one second to him seems to an observer to be a much longer period of time (exactly how much longer depends on their relative velocity).
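
The "how much longer" factor is the Lorentz factor of special relativity, gamma = 1 / sqrt(1 - v²/c²). A minimal sketch follows; the function name is my own, and it assumes constant relative velocity, ignoring the acceleration phases of any real journey.

```python
import math


def lorentz_factor(beta):
    """Time dilation factor for a relative speed of beta = v / c.

    One second on the traveller's clock corresponds to this many
    seconds for the observer. Valid only for 0 <= beta < 1.
    """
    if not 0.0 <= beta < 1.0:
        raise ValueError("beta must satisfy 0 <= beta < 1")
    return 1.0 / math.sqrt(1.0 - beta ** 2)


for beta in (0.1, 0.6, 0.9, 0.99, 0.9999):
    print(f"v = {beta} c: 1 s for the traveller is "
          f"{lorentz_factor(beta):.3f} s for the observer")
```

The factor stays close to 1 at everyday speeds and grows without bound as the speed approaches c, which is why the energy requirement, rather than lifespan, ends up being the constraint.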

So the place we can get to is not so much limited by our lifespan but more by our inability to generate sufficient amounts of energy to accelerate ourselves to speeds close to the speed of light relative to the Earth’s rest frame. And of course another problem is that even if we WERE able to generate sufficient amount of energy our bodies likely would not survive the large acceleration.

One G acceleration for one year attains light speed, and since we evolved in and live in one G, any point in the universe is accessible (via relativity) to us in short time (years; a human lifetime frame) if we can only find or develop the power required to sustain one G acceleration for around a year.
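
As a rough check on the one-G figure, the sketch below compares the Newtonian estimate v = a·t with the standard special-relativistic result for constant proper acceleration, v = c·tanh(a·τ/c), where τ is time on the traveller's clock. The constants and function names are my own choices for illustration, and a year is taken to be 365.25 days.

```python
import math

C = 299_792_458.0            # speed of light, m/s
G = 9.80665                  # standard gravity, m/s^2
YEAR = 365.25 * 24 * 3600    # seconds in a Julian year (an assumption)


def newtonian_speed(accel, t):
    """Speed after time t at constant acceleration, ignoring relativity."""
    return accel * t


def relativistic_speed(accel, tau):
    """Speed relative to the start frame after proper time tau
    at constant proper acceleration."""
    return C * math.tanh(accel * tau / C)


for years in (1, 2, 5):
    newton = newtonian_speed(G, years * YEAR) / C
    rel = relativistic_speed(G, years * YEAR) / C
    print(f"{years} yr at 1 g: Newtonian {newton:.3f} c, relativistic {rel:.5f} c")
```

On the Newtonian estimate, one year at one G does come out at slightly over light speed, which is presumably where the rule of thumb comes from. The relativistic formula gives a speed that is a large fraction of c after a year and keeps approaching c without reaching it.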

As for the mathematical argument of Katja’s OP, I agree with those pointing at the extremely short-sighted sophistry of mistaking present statistical assumptions for objective reality. Depending on the initial assumptions, we could either be at the beginning of a long and grand expanding future or we could be near a great filter, just as the Drake Equation provides for either millions of other civilizations or just one (ours) depending on the initial values assumed for the variables in the equation.

At best, arguments like the OP’s are good mental exercise. But at worst, they’re unrecognized mathematical sleight-of-hand parlor tricks.

Which present statistical assumptions do you suppose I am confusing with objective reality?

Many. Here’s just a few:

1) that lack of present evidence for extraterrestrial life/civilizations is = lack of extraterrestrial life/civilizations

2) that humanity is presently confined to this planet and/or has been in the past or the future

3) that a 6 billion population count constitutes a “large” number (“large” is subjective and relative)

4) that any future filtering precludes any or all bright envisioned futures

There are many logical problems with assuming that such probabilistic arguments possessing so very many unknown variables actually pertain to objective reality except in a purely imaginative kind of way. Such arguments are good mental exercises but that’s about all.

http://en.wikipedia.org/wiki/Doomsday_argument

I agree with SIA, but whether I’m a Thirder or Halfer in the SBP depends on the specific method of scoring my answer(s). Am I getting a mark every time I answer, or only every time the coin is flipped? The disagreement about the SBP is just confusion.
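
The two scoring methods can be compared with a short simulation. This is a sketch under the usual Sleeping Beauty convention, which I am assuming here: heads means one awakening, tails means two. The function name and the fixed seed are my own choices.

```python
import random


def simulate_sleeping_beauty(trials, seed=0):
    """Return (heads fraction per coin flip, heads fraction per awakening).

    Assumes the usual convention: heads -> 1 awakening, tails -> 2.
    """
    rng = random.Random(seed)
    heads_flips = 0
    heads_awakenings = 0
    total_awakenings = 0
    for _ in range(trials):
        heads = rng.random() < 0.5
        awakenings = 1 if heads else 2
        total_awakenings += awakenings
        if heads:
            heads_flips += 1
            heads_awakenings += awakenings
    return heads_flips / trials, heads_awakenings / total_awakenings


per_flip, per_awakening = simulate_sleeping_beauty(100_000)
print(f"scored once per flip:      heads fraction {per_flip:.3f}")
print(f"scored once per awakening: heads fraction {per_awakening:.3f}")
```

Scored once per flip, "heads" is right about half the time; scored once per awakening, it is right about a third of the time. Both numbers come from the same experiment, so which one counts as "the" answer depends on the scoring rule.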

In regards to filters, yes, it’s trivial to point out that future filters are more extreme than past filters. Filter Rarity corresponds to Filter Severity. The longer we survive for, the more likely we are to see rarer/severer filters.

That said, the interesting part is whether we will be filtered, or not.

I say we won’t ever be filtered because a permanent civilization has Infinitely more “Measure” than any finite number of temporary/doomed civilizations.

“There are many more possible ways to die tomorrow than there are possible ways to die today.” -C. T. Turner.
